1 A Mental Model of Thought

1.1 How Humans Think

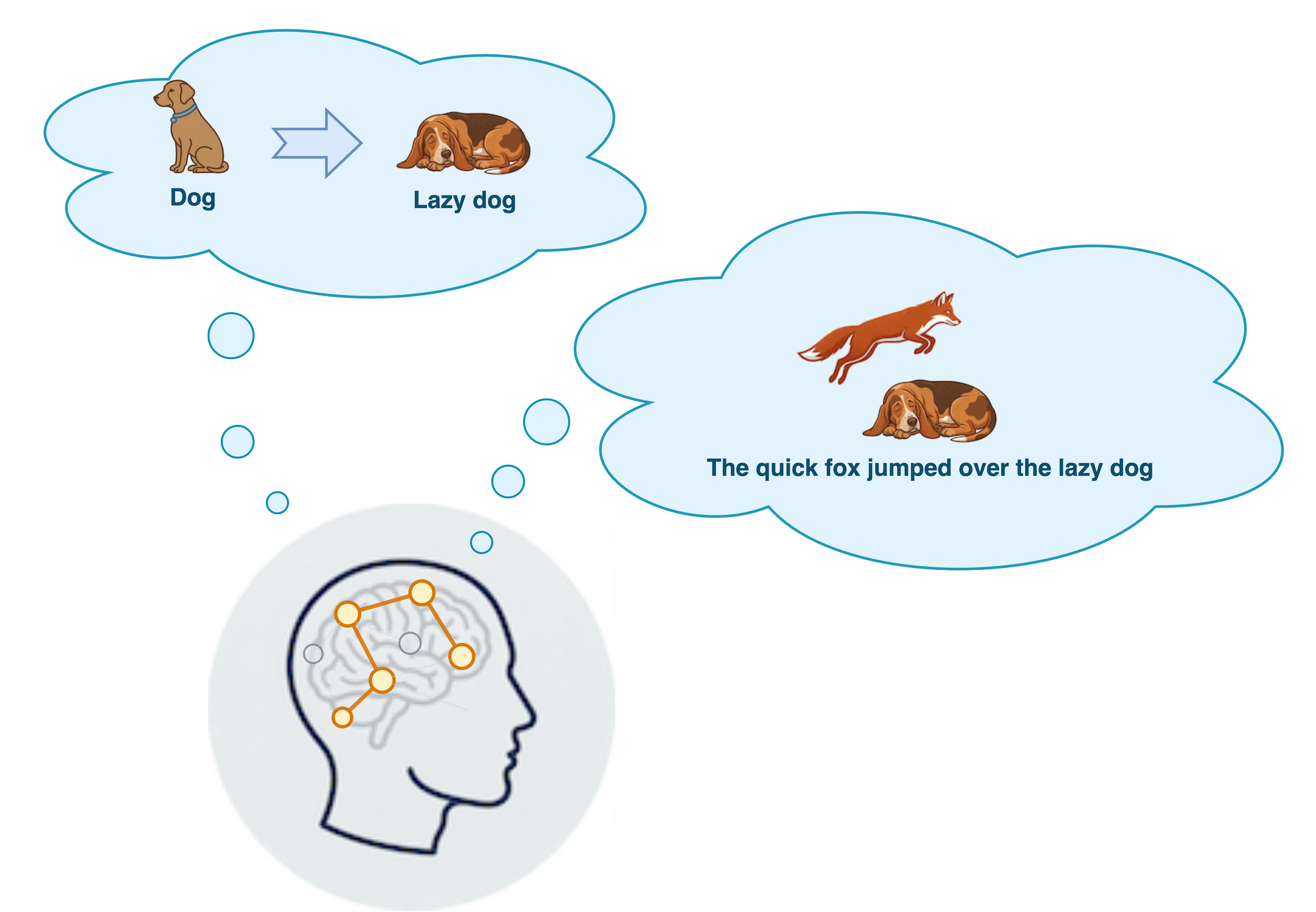

In a low-level, mechanical sense, we humans “think” by mapping different abstract semantic concepts — different meanings — to specific patterns of activations in our brain’s neurons. We have seen dogs and foxes, and we can conjure some representation of these animals within our minds. We can compute on these representations, say by imagining a scene involving one jumping over the other. We can compose a narrative about them. We can somehow transform the representations to add or alter details.

The brain produces an endless sequence of patterns of neurons firing, and somehow we have a mind that

- can make sense of patterns across many billions of neurons,

- has been sculpted by some organizing principle that brings structure to the patterns, and

- can compose all these different semantic concepts in complex, remarkably expressive ways.

As we will see, these properties are also true of the transformer.

The human brain represents abstract concepts as patterns of neurons firing, and it can compose and transform these representations. We don’t need to understand the low-level details of a brain’s neural patterns; the key feature is the ability to change or combine the patterns to create new meanings.

1.2 Thought Transmission via Language

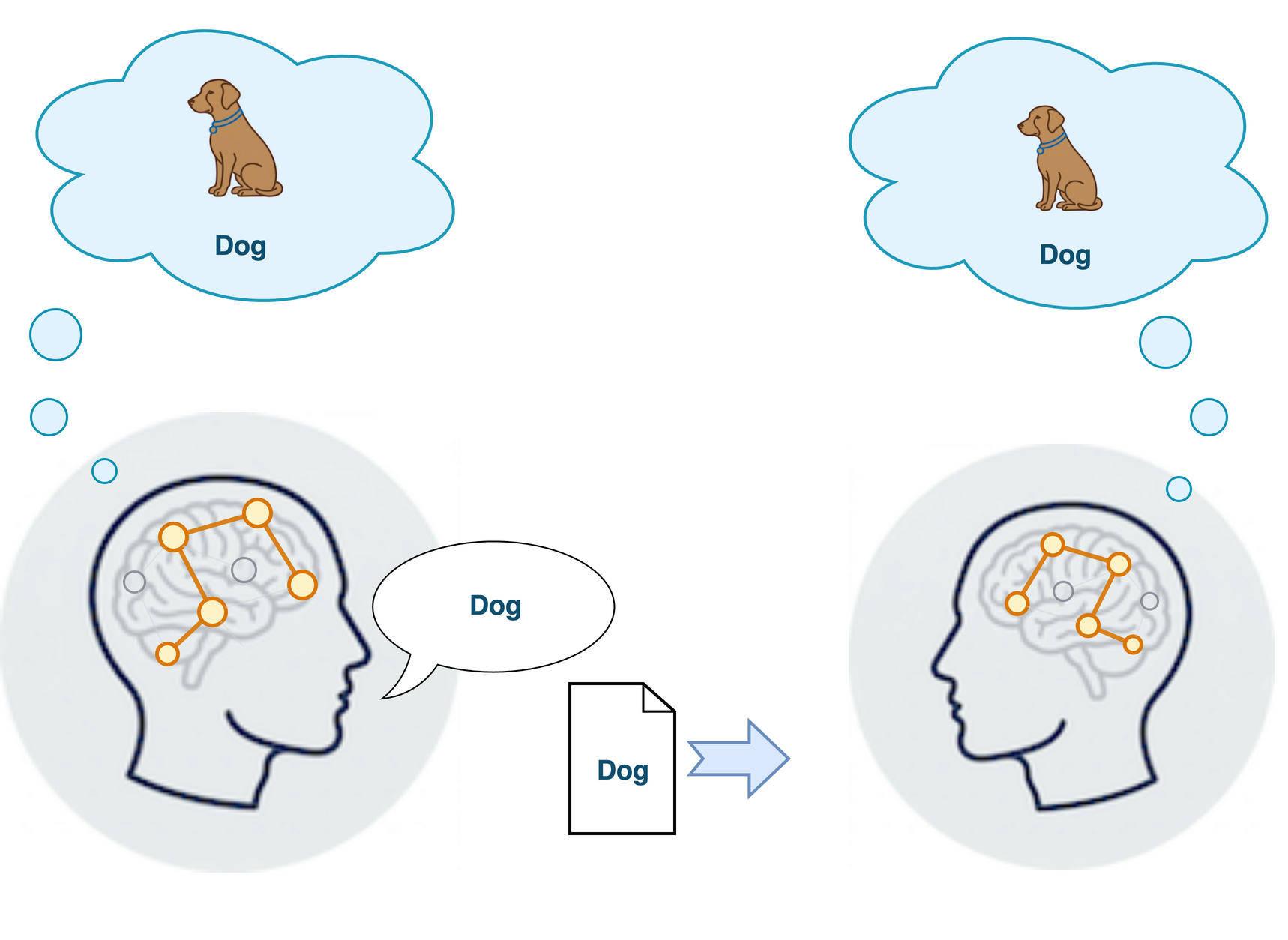

We can’t see each other’s “thought bubble”, or transmit messages via brainwaves, so we invented speech and text to transmit thoughts to one another. We “encode” our thoughts to a natural language when we speak or write, and the listener or reader “decodes” the language into their own thought.

Speakers of the same language have all agreed on how to encode and decode the language, so they can understand one another and share thoughts through language. Generally speaking, we learn a language by exposure to examples of it being used within a context where we have some understanding of what is going on. This allows us to associate words in the language with the related concepts we understand.

Through repeated exposure and more examples, we get a better grasp of the language. We can read or listen more easily, and understand more nuances of the thought encoded in the language. We also learn to “encode” thoughts to language to express ourselves more effectively. We become able to express not only a much broader range of thoughts but also finer shades of meaning. Other speakers of the language are better able to decode our intended meaning as we improve.

In other words, we are not born “programmed” to understand any specific natural language. We organically learn how it works from exposure, context, and practice with feedback on correctness. Transformers organically learn to understand language and “how to think” through an analogous “training” process.

We design the architecture: the number and shapes of the layers in the transformer, along with some of their technical details. But we don’t “program” it or give it explicit rules on what to do for any input.

Like thought, language also represents abstract concepts. Unlike our internal thoughts, language can transmit meaning from speaker to audience. We must learn the rules of a particular natural language, through exposure and practice, to:

- Encode our thoughts into language by writing or speaking in the language, and

- Decode the meaning in the language into our own thought representation by reading or listening to it.

1.3 How Transformers Think

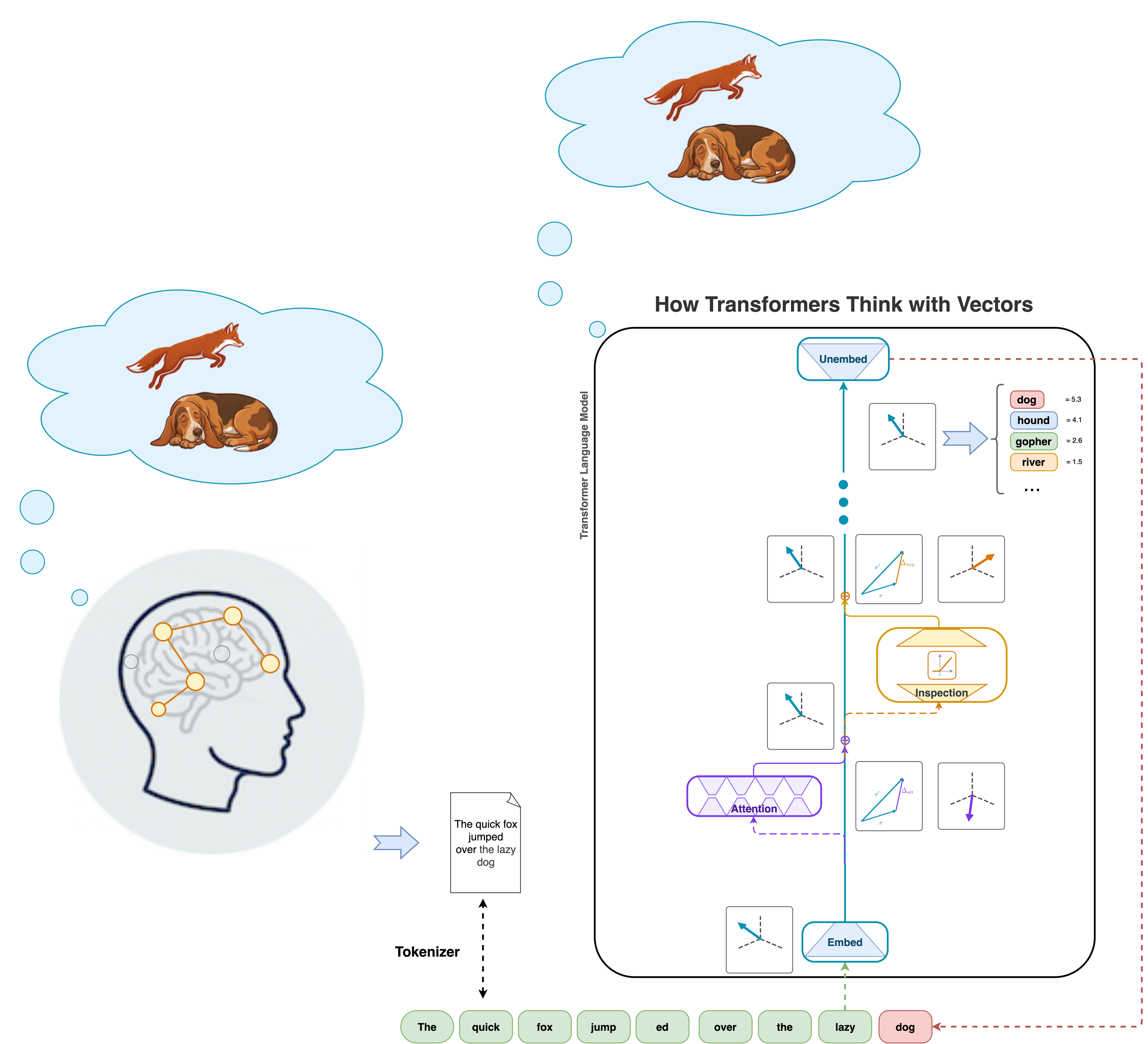

Transformers “think with vectors”. Whereas humans represent abstract thoughts as patterns of neurons firing, transformers represent “thoughts” as vectors of real numbers in a high-dimensional space \(\mathbb{R}^n\) (for some \(n\), typically in the thousands). Vectors can be scaled and added together, so any weighted mixture of thought vectors is itself a valid vector in the same space. This allows transformers to mix and change different concepts through matrix operations on these vector representations. The result of these operations is a refined “thought” representation, with added nuance and context.
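To make the idea of composing vectors concrete, here is a minimal sketch in plain Python. The four-dimensional `dog` and `fox` vectors and the `add_detail` matrix are made-up illustrations, not values from any real model, where the vectors would have thousands of learned coordinates.

```python
# Toy "thought vectors" in R^4 (hypothetical values chosen for illustration).
dog = [1.0, 0.0, 0.5, 0.0]
fox = [0.0, 1.0, 0.5, 0.0]


def mix(a, b, weight_a, weight_b):
    """Weighted sum of two vectors; the result is again a vector in R^n."""
    return [weight_a * x + weight_b * y for x, y in zip(a, b)]


def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# A mixture that is mostly "dog" with some "fox".
blend = mix(dog, fox, 0.75, 0.25)

# A hypothetical 4x4 matrix that keeps the first three coordinates and
# copies the third coordinate into the fourth, standing in for a learned
# operation that adds a detail to the representation.
add_detail = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
]
refined = matvec(add_detail, blend)

print(blend)    # [0.75, 0.25, 0.5, 0.0]
print(refined)  # [0.75, 0.25, 0.5, 0.5]
```

Both the mixture and the matrix product stay inside the same four-dimensional space, which is what lets such operations be chained one after another.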

The thought representation flows through many layers of the transformer, each one updating the represented meaning. The transformer has special layers at the start and end that encode text into this representation and decode it back into text. Between these, the transformer stacks many layers; each layer performs the same types of operations, but with its own learned parameters. Each operation is essentially one or two matrix multiplications. The matrices for the same operation in different layers always have the same shape, but each layer has different numerical values in its matrices.
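The point about shape versus values can be sketched in a few lines. This is an illustration under stated assumptions: the sizes `DIM` and `NUM_LAYERS` are toy values, the weights are random rather than learned, and each layer is reduced to a single matrix whose output is added back onto the thought vector.

```python
import random

DIM = 4         # toy width; real models use thousands of dimensions
NUM_LAYERS = 3  # toy depth

rng = random.Random(0)  # fixed seed so the sketch is repeatable


def random_matrix(rows, cols):
    """Random stand-in for a matrix that training would normally learn."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# One matrix per layer: identical shape, independently chosen values.
layers = [random_matrix(DIM, DIM) for _ in range(NUM_LAYERS)]


def forward(thought):
    """Pass a thought vector through every layer in order."""
    for weights in layers:
        update = matvec(weights, thought)
        # Assumption: each layer adds its update to the running vector.
        thought = [t + u for t, u in zip(thought, update)]
    return thought


result = forward([1.0, 0.0, 0.5, 0.0])
print(len(result))  # 4
```

Because every layer maps a `DIM`-dimensional vector to another `DIM`-dimensional vector, layers can be stacked to any depth without changing the form of the representation.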

1.4 The Four Fundamental Operations

Remarkably, transformers perform just four fundamental types of operations involving “thought vectors”:

- Embed — Convert text into thought vectors. The embedding matrix performs a lookup, mapping each discrete token to a vector in the embedding space. Analogous to humans reading or hearing language.

- Attend — Identify relationships among different words in a passage and move information between word representations. The attention layer performs this operation.

- Inspect — Probe, filter, and update a thought vector. I prefer to think of this as the “inspection” layer, though it is commonly called a multi-layer perceptron (MLP), feed-forward, or linear layer in the literature. This operation effectively magnifies the representation, completes a detailed inspection checklist, filters out irrelevant information, and creates an update that adds the result to the thought vector representation.

- Unembed — Convert the final thought vector back into text. The unembedding matrix maps from embedding space to a distribution over tokens, with larger numbers signifying a better continuation of the text.

The first and last operations translate between human text and machine thought. All the “thinking” comes from alternating attention and inspection operations, repeated across many layers.
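The four operations can be sketched end to end in plain Python. This is a toy illustration, not a faithful implementation of any particular model. The vocabulary, sizes, and random weights are hypothetical, and several details are assumptions not stated in the text: a single attention head, each position attending only to itself and earlier positions, a ReLU as the filter, updates added back onto the vector, and a softmax to turn scores into a distribution. Normalization and position information are left out.

```python
import math
import random

rng = random.Random(0)
VOCAB = ["the", "quick", "fox", "jumps"]  # hypothetical toy vocabulary
DIM, HIDDEN, NUM_LAYERS = 4, 16, 2        # toy sizes


def rand_matrix(rows, cols):
    """Random stand-in for a matrix that training would normally learn."""
    return [[rng.gauss(0.0, 0.3) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def add(a, b):
    return [x + y for x, y in zip(a, b)]


def softmax(scores):
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


embedding = rand_matrix(len(VOCAB), DIM)    # one thought vector per token
unembedding = rand_matrix(len(VOCAB), DIM)  # one row of scoring weights per token
layers = [
    {
        "query": rand_matrix(DIM, DIM),
        "key": rand_matrix(DIM, DIM),
        "value": rand_matrix(DIM, DIM),
        "expand": rand_matrix(HIDDEN, DIM),
        "project": rand_matrix(DIM, HIDDEN),
    }
    for _ in range(NUM_LAYERS)
]


def embed_tokens(tokens):
    """Embed: look up one vector per token."""
    return [list(embedding[VOCAB.index(tok)]) for tok in tokens]


def attend(thoughts, layer):
    """Attend: move information between positions."""
    updated = []
    for i, current in enumerate(thoughts):
        query = matvec(layer["query"], current)
        visible = thoughts[: i + 1]  # this position and the ones before it
        scores = [dot(query, matvec(layer["key"], t)) / math.sqrt(DIM) for t in visible]
        weights = softmax(scores)
        values = [matvec(layer["value"], t) for t in visible]
        moved = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(DIM)]
        updated.append(add(current, moved))
    return updated


def inspect_vectors(thoughts, layer):
    """Inspect: expand each vector, filter it, and add the projected update."""
    updated = []
    for current in thoughts:
        hidden = [max(0.0, h) for h in matvec(layer["expand"], current)]  # ReLU
        updated.append(add(current, matvec(layer["project"], hidden)))
    return updated


def unembed(thought):
    """Unembed: score every token, then normalize the scores to probabilities."""
    return softmax(matvec(unembedding, thought))


def next_token_probabilities(tokens):
    thoughts = embed_tokens(tokens)
    for layer in layers:
        thoughts = inspect_vectors(attend(thoughts, layer), layer)
    return dict(zip(VOCAB, unembed(thoughts[-1])))


probs = next_token_probabilities(["the", "quick", "fox"])
print(probs)
```

With random weights the printed probabilities carry no meaning. The sketch only shows how data flows: text is embedded once, the attend and inspect steps alternate in every layer, and the final vector is unembedded into one score per token.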

1.5 Communicating with Transformers

In a macroscopic sense, the way we communicate with transformers through text is exactly how we communicate with each other via text. When we write, we encode a specific meaning into text. Whether read by a human or a transformer, the reader decodes the text into their own internal representation of the underlying meaning. When we read, there is some latent semantic meaning represented by that text that we can decode into our own understanding, regardless of whether a human or transformer imbued that meaning into the text. Humans and transformers are on equal footing when it comes to working with and understanding natural language text.

This is a very dramatic change from the way we interact with machines through traditional software. To communicate with a machine via a programming language, there must be unambiguous rules for syntax and grammar. Any deviation from the constraints of the language produces invalid code that fails to compile or run. You must declare precisely what to do, step-by-step in low-level detail, and the program will mechanically follow these instructions for any valid code. Programmers invented many layers of programming languages, each abstracting away some low-level details of the previous layer, so that we can more easily tell the computer the precise low-level instructions expressed as statements in the higher-level language. This whole approach created significant technical barriers to communicating with machines and getting them to do what you want. Yet these layers are necessary: they translate statements in a high-level programming language that trained developers can understand into low-level machine code that tells the computer exactly which bits to flip from 1 to 0 and vice versa.
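A small illustration of this strictness, using Python's own parser from the standard `ast` module. The helper name `is_valid_python` and the two source strings are made up for this example.

```python
import ast


def is_valid_python(source):
    """Return True if the Python parser accepts the source text."""
    try:
        ast.parse(source)
        return True
    except SyntaxError:
        return False


well_formed = "total = (price * quantity)"
one_typo = "total = (price * quantity"  # closing parenthesis missing

accepted = is_valid_python(well_formed)
rejected = not is_valid_python(one_typo)

print(accepted, rejected)  # True True
```

A human reader has no trouble guessing what the second line was meant to say, but the parser rejects it outright because of one missing character.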

Transformers and language models are an entirely different paradigm. Where a computer program is black-and-white, a transformer is all shades of gray. A transformer can generalize, extrapolate, or fill in gaps in the provided information. It can understand “what you meant” in the case of typos or syntax errors.

Text is the communication channel between humans and transformers. Both can read text and translate it into internal semantic representations. The representations take a different form — synapse patterns vs. floating-point vectors — but the function they serve is strikingly similar.

Text is the universal communication medium between humans and transformers.